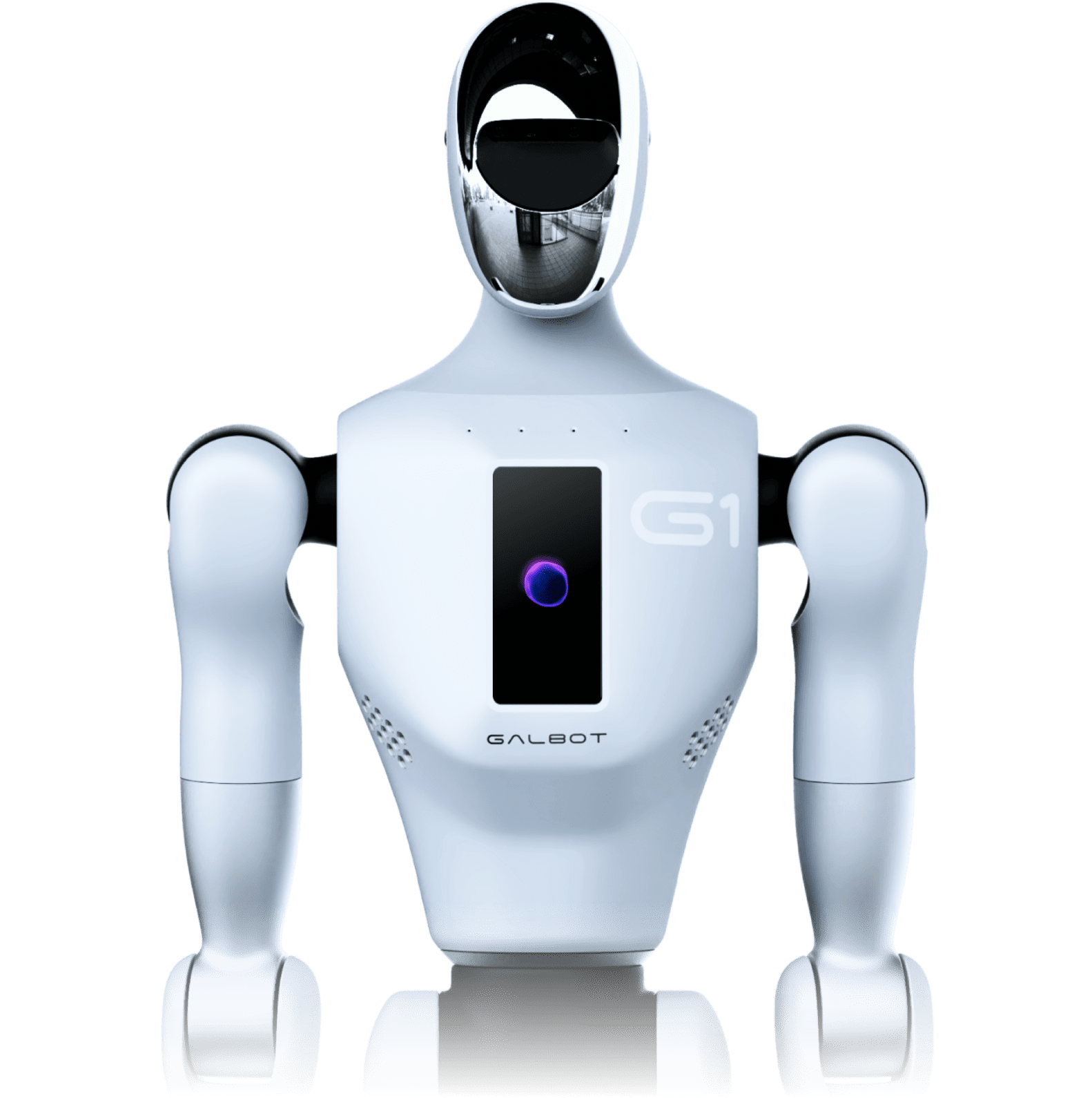

NForge v1.2.0 introduces two capabilities that take Galbot beyond reactive therapy, toward anticipatory understanding and experiential reconstruction. The robot no longer responds only to what you say: it reconstructs what you experienced and anticipates what you need before you ask.

✨ The Next Frontier

Dream Replay decodes brain activity in reverse, producing experiential narratives that Galbot can speak aloud. Silent Communication reads micro-expressions, posture, and breathing to predict your needs without a single word. Together, they are designed to make Galbot a far more perceptive companion robot.

Dream Replay

Feed a sequence of cortical predictions (or real fMRI recordings) through NForge in reverse to reconstruct the sensory experience that produced them. The DreamDecoder neural network maps ~20,484 cortical vertices back to visual (1280-d), auditory (1024-d), and semantic (3072-d) feature spaces through a shared trunk with modality-specific heads. Temporal smoothing with an exponential moving average (α = 0.3) keeps frame-to-frame transitions coherent. The NarrativeGenerator then converts the reconstructed embeddings into human-readable descriptions, mapping activation intensity onto a poetic lexicon that ranges from "faint whispers" to "thundering crescendos". Galbot narrates peak emotional moments aloud, with matching facial expressions and gestures.
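The temporal smoothing step can be illustrated with a small standalone sketch. This is not NForge's implementation: the function name `ema_smooth` is hypothetical, and the sketch assumes that α weights the newest frame, i.e. s_t = α·x_t + (1 − α)·s_{t−1}. The release notes state only that α = 0.3.

```python
from typing import List, Sequence


def ema_smooth(frames: Sequence[Sequence[float]], alpha: float = 0.3) -> List[List[float]]:
    """Exponentially smooth a time-ordered sequence of feature vectors.

    Assumption: alpha is the weight on the newest frame. The first frame
    is passed through unchanged because there is no history yet.
    """
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    smoothed: List[List[float]] = []
    for frame in frames:
        if not smoothed:
            smoothed.append(list(frame))
            continue
        prev = smoothed[-1]
        if len(frame) != len(prev):
            raise ValueError("all frames must have the same dimension")
        # Blend the new frame with the running average, element by element.
        smoothed.append([alpha * x + (1.0 - alpha) * p for x, p in zip(frame, prev)])
    return smoothed


# Toy 2-d "embeddings": the jump in the first component is damped over time.
frames = [[0.0, 1.0], [1.0, 1.0], [1.0, 1.0]]
smoothed = ema_smooth(frames)
```

With a low α such as 0.3, each output frame leans mostly on its predecessor, which trades responsiveness for smoother transitions.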

Silent Communication

Galbot learns to understand you without words. The system fuses three real-time perception streams: MicroExpressionDecoder (468 MediaPipe face mesh landmarks → 7 micro-expressions, including brow furrow, lip press, and jaw clench), PostureAnalyzer (33 body keypoints → 6 posture classifications), and BreathingMonitor (zero crossings of chest displacement → breaths-per-minute estimate). An IntentPredictor evaluates pattern rules against a rolling signal history to predict one of 8 needs (comfort, space, engagement, reassurance, silence, stimulation, rest, connection), each with an urgency score. The SilentDialogue session manager tracks rapport quality over time by measuring whether Galbot's proactive responses consistently lead to positive follow-up signals.
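To make the zero-crossing idea concrete, here is a minimal sketch of how a breathing rate could be estimated from a chest displacement trace. It is an illustration under stated assumptions, not the BreathingMonitor code: the function name `estimate_bpm` is hypothetical, the signal is assumed to be uniformly sampled at a known rate, and each breath is assumed to produce two crossings of the signal mean.

```python
import math
from typing import Sequence


def estimate_bpm(displacement: Sequence[float], sample_rate_hz: float) -> float:
    """Estimate breaths per minute by counting crossings of the signal mean."""
    if len(displacement) < 2 or sample_rate_hz <= 0:
        raise ValueError("need at least two samples and a positive sample rate")
    mean = sum(displacement) / len(displacement)
    crossings = 0
    prev_sign = 0
    for value in displacement:
        centered = value - mean
        sign = 1 if centered > 0 else (-1 if centered < 0 else 0)
        if sign == 0:
            continue  # ignore samples that sit exactly on the mean
        if prev_sign != 0 and sign != prev_sign:
            crossings += 1
        prev_sign = sign
    duration_min = (len(displacement) - 1) / sample_rate_hz / 60.0
    # One full breath (inhale + exhale) crosses the mean twice.
    return (crossings / 2.0) / duration_min


# Synthetic trace: 0.25 Hz breathing (15 breaths per minute), 60 s at 20 Hz.
sample_rate_hz = 20.0
signal = [math.sin(2 * math.pi * 0.25 * (i / sample_rate_hz) + 0.1) for i in range(1201)]
bpm = estimate_bpm(signal, sample_rate_hz)  # approximately 15
```

On real sensor data a plain crossing count is sensitive to noise, so a practical version would likely add low-pass filtering or a hysteresis band around the mean.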

[Figure: Dream Replay Pipeline]

[Figure: Silent Communication Pipeline]

$ Quick Start — Dream Replay

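# Import path for the Dream Replay classes is assumed to match
# the one used for EmotionEngine and GalbotBridge
from nforge.robotics import (
    DreamDecoder, NarrativeGenerator, DreamReplaySession,
    EmotionEngine, GalbotBridge,
)

# Build the pipeline
decoder = DreamDecoder()
narrator = NarrativeGenerator(EmotionEngine.from_hcp_labels())
session = DreamReplaySession(decoder, narrator, GalbotBridge())

# Replay a brain recording: `predictions` and `timestamps`
# must be supplied by the caller
report = session.replay(predictions, timestamps)
print(report.narrative)
# "A vivid scene unfolds — bright colours and sharp details...
# gradually, a warm voice emerges, rich and resonant..."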
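The narrative text comes from mapping activation intensity onto a lexicon. A minimal sketch of such a mapping is shown here, with several assumptions: the function name `describe_intensity` is hypothetical, intensity is assumed to be normalized to the range 0 to 1, and only the first and last lexicon entries appear in these release notes (the middle entries are invented placeholders).

```python
from typing import Sequence

# Only "faint whispers" and "thundering crescendos" come from the release
# notes; the three middle entries are invented for illustration.
LEXICON = (
    "faint whispers",
    "soft murmurs",
    "steady tones",
    "swelling chords",
    "thundering crescendos",
)


def describe_intensity(intensity: float, lexicon: Sequence[str] = LEXICON) -> str:
    """Pick a phrase by splitting the [0, 1] intensity range into equal bins."""
    clamped = min(max(intensity, 0.0), 1.0)
    # int() floors the scaled value; the min() keeps intensity == 1.0 in range.
    index = min(int(clamped * len(lexicon)), len(lexicon) - 1)
    return lexicon[index]


quiet = describe_intensity(0.05)
loud = describe_intensity(0.97)
```

Equal-width bins are the simplest choice; a real generator might use thresholds tuned per modality instead.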

$ Quick Start — Silent Communication

# Import path assumed to match the Dream Replay example
from nforge.robotics import (
    MicroExpressionDecoder, PostureAnalyzer, BreathingMonitor,
    IntentPredictor, SilentDialogue, EmotionEngine, GalbotBridge,
)

# Assemble the perception stack
dialogue = SilentDialogue(
    expression_decoder=MicroExpressionDecoder(),
    posture_analyzer=PostureAnalyzer(),
    breathing_monitor=BreathingMonitor(),
    intent_predictor=IntentPredictor(EmotionEngine.from_hcp_labels()),
    bridge=GalbotBridge(),
)

# Feed live sensor data: no words needed
# (face_tensor and pose_tensor come from your own capture pipeline)
intent = dialogue.observe(
    face_landmarks=face_tensor,
    body_keypoints=pose_tensor,
)

if intent:
    action = dialogue.act(intent)
    # Galbot responds proactively
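For intuition about what happens between `observe` and `act`, the following standalone sketch shows one way pattern rules could be evaluated against a rolling signal history with urgency scoring. Everything in it is an assumption made for illustration: the classes `Signal`, `Intent`, and `RollingIntentPredictor`, the two rules, the posture label "slumped", the thresholds, and the choice to score urgency as the fraction of the window that matches a rule. None of these are taken from the NForge API.

```python
from collections import deque
from dataclasses import dataclass
from typing import Callable, Deque, List, Optional, Tuple


@dataclass(frozen=True)
class Signal:
    expression: str       # e.g. "jaw_clench"
    posture: str          # e.g. "slumped" (hypothetical label)
    breathing_bpm: float


@dataclass(frozen=True)
class Intent:
    need: str
    urgency: float        # fraction of the window that matched, 0..1


Rule = Tuple[str, Callable[[Signal], bool]]

# Hypothetical rules; the need names come from the release notes.
RULES: List[Rule] = [
    ("comfort", lambda s: s.expression == "jaw_clench" and s.breathing_bpm > 20),
    ("rest", lambda s: s.posture == "slumped" and s.breathing_bpm < 12),
]


class RollingIntentPredictor:
    def __init__(self, window: int = 5, min_urgency: float = 0.6) -> None:
        self.window = window
        self.history: Deque[Signal] = deque(maxlen=window)
        self.min_urgency = min_urgency

    def observe(self, signal: Signal) -> Optional[Intent]:
        """Record a signal and return the most urgent matching need, if any."""
        self.history.append(signal)
        best: Optional[Intent] = None
        for need, matches in RULES:
            # Dividing by the full window size keeps urgency low until
            # enough evidence has accumulated.
            urgency = sum(matches(s) for s in self.history) / self.window
            if urgency >= self.min_urgency and (best is None or urgency > best.urgency):
                best = Intent(need, urgency)
        return best


predictor = RollingIntentPredictor()
tense = Signal("jaw_clench", "upright", 24.0)
results = [predictor.observe(tense) for _ in range(3)]
```

In this sketch a single tense frame is not enough to trigger a response: the predictor stays silent until three of the last five observations match a rule, which mirrors the idea of acting on sustained patterns rather than momentary signals.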